The Algorithmic Gatekeepers: A Comprehensive Analysis of YouTube AI Monetization Rules and Spotify Music Policy (2025)

1. Executive Summary: The Authenticity Paradigm

The digital content landscape of 2025 stands at a critical inflection point, characterized by a fundamental tension between the exponential scalability of generative artificial intelligence (GenAI) and the human-centric imperatives of platform integrity. This report provides an exhaustive, expert-level analysis of the regulatory frameworks recently instituted by the world’s two dominant media platforms: YouTube and Spotify. As we navigate the latter half of the decade, the era of “permissive growth” has definitively ended, replaced by an era of “verified authenticity.”

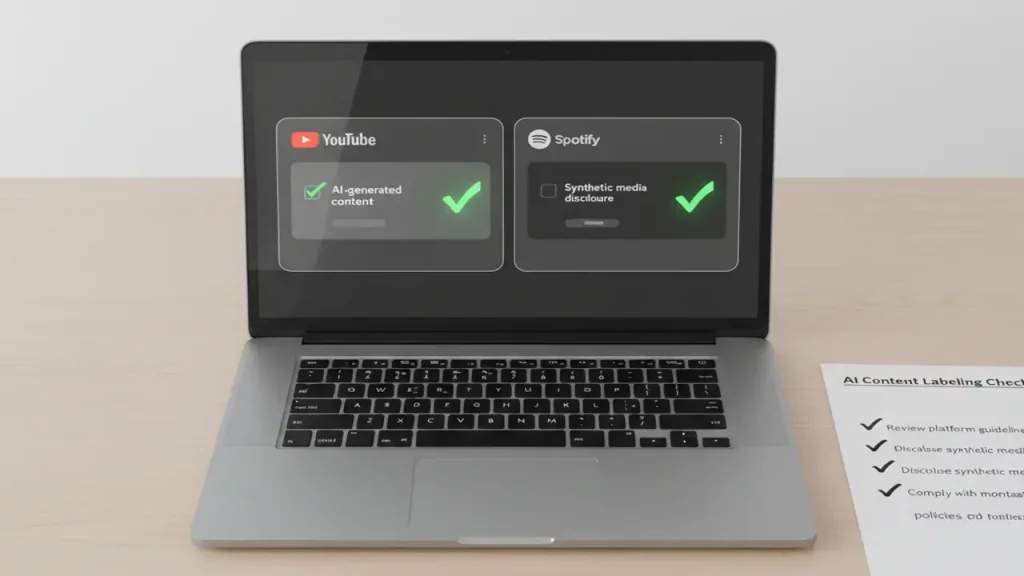

For creators, rights holders, and digital strategists, understanding the YouTube AI monetization rules and Spotify’s evolving music policies is no longer a matter of optimization—it is a matter of survival. The July 15, 2025 update to the YouTube Partner Program (YPP) and Spotify’s September 2025 “Spam Filtering” protocols represent the most aggressive intervention in the creator economy since the inception of algorithmic recommendation.

The central thesis of this report is that platforms are transitioning from reactive moderation (removing bad content) to proactive algorithmic filtering (deprioritizing inauthentic content). We observe a distinct shift away from engagement metrics (views, clicks) as the sole arbiter of monetization eligibility. Instead, a new variable has been introduced: “Human Value-Add.” The data indicates that both YouTube and Spotify are leveraging advanced metadata standards, such as the C2PA and DDEX frameworks, to create a bifurcated internet where “human-verified” content enjoys premium monetization, while “synthetic mass-production” is relegated to a non-monetizable, low-visibility tier.

This document details the mechanics of these policies, offering actionable insights into compliance, the technical requirements of metadata disclosure, and the necessary infrastructure upgrades—specifically the shift toward local AI processing on high-performance hardware—required to navigate this new environment.


2. YouTube AI Monetization Rules: The July 2025 Overhaul

The evolution of the YouTube Partner Program (YPP) has historically been a series of responses to existential threats. In 2017, the “Adpocalypse” forced the platform to tighten entry requirements to protect advertisers from extremist content. In 2025, the threat is different: it is the dilution of the platform’s value proposition by an infinite supply of AI-generated “slop.”

2.1 The Historical Context of the “Authenticity” Mandate

To understand the severity of the 2025 updates, one must contextualize them within YouTube’s broader mission. The platform’s stated goal is to “give everyone a voice”. However, the democratization of content creation via GenAI tools—where a single user can script, voice, and edit 50 videos a day—threatened to drown out legitimate human voices. By early 2025, YouTube’s internal metrics likely showed a disturbing trend: a spike in server costs associated with hosting petabytes of low-retention, machine-generated video, coupled with a decline in “session quality” as users swiped past endless iterations of identical AI content. The July 15, 2025 policy update was the inevitable immune response.

2.2 Analyzing the July 15, 2025 Policy Update

On July 15, 2025, YouTube implemented a revision to the YPP eligibility criteria that specifically redefined “inauthentic content.” While previous policies banned “repetitious” content, the new language is explicitly calibrated to detect the fingerprints of automation.

2.2.1 Defining “Mass-Produced” Content

The core of the new policy is the restriction on “mass-produced” content. This term, once vague, has been sharpened by enforcement precedents observed throughout late 2025.

- Velocity as a Signal: YouTube’s algorithms now analyze the velocity of uploads relative to the complexity of the content. A channel uploading five 10-minute documentaries per day is flagged for review. The system infers that no human production team could achieve this output without significant automated shortcuts that bypass editorial oversight.

- Template Detection: The policy targets “templatized” videos. This refers to content where the visual cadence, script structure, and audio mixing are mathematically identical across hundreds of videos, with only the variables (e.g., the name of the celebrity or the Reddit thread being read) changing.

- Programmatic Generation: The update targets “content farms”—operations that use API-driven workflows to scrape the web (e.g., Wikipedia articles, news feeds) and auto-convert text to video without human intervention. The platform has deemed this “low-effort,” stripping it of monetization eligibility regardless of view count.

Insight: The policy does not ban high volume; it bans low-effort volume. A news channel like CNN can upload 50 times a day because the content is distinct. A “Cash Cow” channel uploading 50 “Facts About Dogs” videos using the same stock footage library and TTS voice will be demonetized.
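The distinction between high volume and low-effort volume can be illustrated with a toy heuristic. This is a minimal sketch, not YouTube's classifier: the function names, the `min_uploads` and `min_similarity` cutoffs, and the use of character-level script similarity are all assumptions made for illustration.

```python
from difflib import SequenceMatcher
from itertools import combinations


def mean_pairwise_similarity(scripts: list[str]) -> float:
    """Average character-level similarity (0..1) over all pairs of scripts."""
    pairs = list(combinations(scripts, 2))
    if not pairs:
        return 0.0
    total = sum(SequenceMatcher(None, a, b).ratio() for a, b in pairs)
    return total / len(pairs)


def looks_mass_produced(
    scripts: list[str],
    uploads_per_day: int,
    min_uploads: int = 5,         # hypothetical velocity cutoff
    min_similarity: float = 0.8,  # hypothetical template cutoff
) -> bool:
    """Flag only when high volume and near-identical scripts occur together."""
    return (
        uploads_per_day >= min_uploads
        and mean_pairwise_similarity(scripts) >= min_similarity
    )


# Hypothetical sample data: one templated channel, one varied newsroom.
TEMPLATE = "Here are ten amazing facts about {} that will surprise you today."
templated = [TEMPLATE.format(topic) for topic in ("dogs", "cats", "bats")]
distinct = [
    "Markets fell sharply after the central bank raised interest rates.",
    "The city council approved a new network of protected bike lanes.",
    "Researchers described a previously unknown frog species in Peru.",
]

print(looks_mass_produced(templated, uploads_per_day=50))  # True
print(looks_mass_produced(distinct, uploads_per_day=50))   # False
```

Both sample channels upload 50 times a day, but only the templated one trips the flag, which mirrors the CNN versus "Facts About Dogs" contrast.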

2.2.2 The “Repetitious” Content Clause

“Repetitious” content is now defined not just by the video file, but by the viewer’s experience. If a viewer watches three videos from a channel and cannot tell them apart except by their titles, the channel is in violation.

- Synth-Voice Overlap: A critical, often overlooked factor is the “Voiceprint.” If a channel uses a standard, non-finetuned AI voice (e.g., a default ElevenLabs or OpenAI voice) that is also used by 50,000 other channels, YouTube’s classifiers may de-rank the content as “generic.” Authenticity now extends to the sonic identity of the channel.

2.3 The “Educational, Documentary, Scientific, or Artistic” (EDSA) Exception

Despite the crackdown, YouTube remains a haven for AI-assisted creativity, provided it falls under the EDSA context. The platform explicitly states that exceptions are made for content with clear educational or artistic value.

- The Transformative Threshold: To qualify for the EDSA exception, AI content must be “transformative.” A video using AI visuals to illustrate a complex physics concept (which cannot be filmed) is monetizable. A video using AI visuals to merely fill screen time while a robot voice reads a copied script is not.

- Commentary is King: The most reliable way to secure monetization in 2025 is through original human commentary. Reaction channels, often targeted for “reused content,” can survive if the reactor provides substantial critical analysis. Similarly, AI channels that feature a human host (or a highly customized, unique VTuber avatar) introducing and analyzing the AI generations are safer than “faceless” autogenerated streams.

AI Content Monetization Policy Changes (2025)

| Feature | Pre-2025 Policy Status | 2025 Policy Status | Technical Reason for Change |
|---|---|---|---|
| Stock Footage Compilations | Gray Area | Demonetized | Considered "**Mass-Produced**" if unedited, failing the originality test. |
| AI Text-to-Speech (Generic) | Allowed | High Risk | Flagged as "**Repetitious**" audio signature due to lack of inflection and unique characteristics. |
| AI Text-to-Speech (Custom) | Allowed | Allowed | Unique voiceprint indicates high effort and clear separation from generic TTS tools. |
| Script-to-Video Automation | Allowed | Banned | Lacks "**Human Editorial**" input, violating policy on minimal transformation. |
| AI Visuals (Labelled) | Allowed | Allowed | Compliance with Disclosure Policy is sufficient; effort is recognized if labeled. |

2.4 The Enforcement Mechanism: Demonetization vs. Removal

The consequences of violating the YouTube AI monetization rules vary.

- Demonetization: The primary penalty is removal from the YPP. Ads are disabled, and the creator loses access to features like Super Chat and Channel Memberships.

- Shadowbanning (Discovery Reduction): A more subtle penalty is the removal of the channel from the "Recommended" algorithm. The videos remain playable but stop accruing views. This is often the fate of "slop" channels—they aren't banned, they are simply ignored by the distribution engine.

- Suspension: Outright channel termination is reserved for severe violations, such as using deepfakes to impersonate public figures or generating non-consensual sexual imagery.

3. The Disclosure Framework: YouTube's "Altered or Synthetic" Label

If the first pillar of the 2025 policy is "Quality," the second is "Transparency." YouTube has recognized that it cannot (and should not) detect all AI content. Instead, it has shifted the burden of truth to the creator through a mandatory disclosure system.

3.1 The Three Tiers of Disclosure

The policy introduced in late 2024 and solidified in 2025 categorizes AI usage into three tiers, each with different obligations.

3.1.1 Tier 1: Mandatory Disclosure (The "Reality Risk")

Creators must check the "Altered or Synthetic" box in YouTube Studio if the content meets specific criteria regarding realism. This is not a request; it is a Terms of Service requirement.

- Realistic Depiction of Events: If a video uses AI to show a real place (e.g., Times Square) experiencing an event that never happened (e.g., a massive flood), it requires disclosure. This prevents AI from being used to fabricate news or incite panic.

- Realistic Depiction of Persons: Any content that makes a real person appear to say or do something they did not requires disclosure. This applies even to "benign" uses, such as making a historical figure speak, if the depiction is photorealistic.

- Voice Synthesis: Using an AI model to replicate the voice of a real individual (narrator, politician, celebrity) requires a label.

3.1.2 Tier 2: Exemptions (The "Fantasy/Productivity" Clause)

Crucially, YouTube does not want to label everything. Over-labeling leads to "warning fatigue," where viewers ignore the disclosures. Therefore, specific uses are exempt:

- Clearly Unrealistic Content: A video of a person riding a dragon, or an anime-style animation generated by AI, does not need a label. The "fictional nature" is self-evident to a reasonable viewer.

- Productivity & Scripting: Using ChatGPT to write a script, or using AI to organize a shot list, does not require disclosure. The disclosure applies to the media assets (pixels and sound waves), not the ideas.

- Aesthetic Edits: Beauty filters, color grading AI, and background blur (even if AI-generated) are exempt, as they do not fundamentally alter the "truth" of the scene.

3.1.3 Tier 3: Platform-Injected Labels

For content created using YouTube’s proprietary tools (like Dream Screen for Shorts), the platform automatically applies the label. The metadata is watermarked at the point of creation, ensuring that even if the user attempts to hide it, the player will display "Made with AI".
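The three tiers above can be condensed into a small decision function. This is a sketch of the logic as described in this section only: the `AiUsage` fields and the `Disclosure` enum are hypothetical names, not part of any YouTube API.

```python
from dataclasses import dataclass
from enum import Enum


class Disclosure(Enum):
    AUTO_LABELED = "label applied automatically by the platform tool"
    REQUIRED = "creator must check the Altered or Synthetic box"
    NOT_REQUIRED = "no disclosure needed"


@dataclass(frozen=True)
class AiUsage:
    """Hypothetical description of how AI was used in one video."""
    made_with_platform_tool: bool = False  # Tier 3, e.g. Dream Screen
    realistic_fake_event: bool = False     # Tier 1
    realistic_real_person: bool = False    # Tier 1
    cloned_real_voice: bool = False        # Tier 1
    # Scripting help, fantasy visuals and aesthetic filters (Tier 2)
    # set none of the flags above, so they fall through to NOT_REQUIRED.


def disclosure_for(usage: AiUsage) -> Disclosure:
    if usage.made_with_platform_tool:
        return Disclosure.AUTO_LABELED
    if (
        usage.realistic_fake_event
        or usage.realistic_real_person
        or usage.cloned_real_voice
    ):
        return Disclosure.REQUIRED
    return Disclosure.NOT_REQUIRED


print(disclosure_for(AiUsage(realistic_fake_event=True)).name)  # REQUIRED
print(disclosure_for(AiUsage()).name)                           # NOT_REQUIRED
```

Modeling Tier 2 as the absence of any Tier 1 flag keeps the exemption list open-ended, which matches the way the policy enumerates what must be labeled rather than what may go unlabeled.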

3.2 The User Interface and Viewer Experience

The disclosure manifests in two ways:

- Description Panel: For most content, a small notice appears in the expanded description box: "Sound or visuals were significantly altered or generated by AI."

- Video Player Overlay: For sensitive topics (health, news, elections), the label is promoted to a prominent overlay on the video player itself. This ensures that a viewer scrolling through a feed sees the context before watching the content.

Strategic Insight: Creators should view the label not as a "mark of shame" but as a "trust signal." Early data suggests that audiences are more forgiving of labeled AI content than they are of "stealth" AI content that is later exposed. Transparency builds long-term community trust, which is the foundation of monetization.

3.3 The Risk of Non-Disclosure

YouTube has implemented "passive detection" systems. If a creator repeatedly uploads realistic AI content without the label, and YouTube’s internal classifiers detect it, the platform typically issues a warning. However, persistent non-compliance leads to penalties. The most significant is visibility reduction. YouTube has explicitly stated that undisclosed AI content may be demoted in search rankings, effectively killing the video’s potential to go viral.

4. Rights Management 2.0: The Deepfake Defense

In 2025, the conversation around AI moved from "misinformation" to "identity theft." The proliferation of "AI Covers"—where users utilize models like RVC (Retrieval-based Voice Conversion) to make famous artists "sing" other songs—forced YouTube to upgrade its Content ID infrastructure.

4.1 Synthetic Singing Identification

YouTube’s Content ID system, the most sophisticated copyright management tool on the internet, was updated to recognize synthetic vocal prints.

- Mechanism: Partners (labels and artists) can ingest a "vocal model" into the Content ID database. The system then scans user uploads not just for melody matches (the old standard) but for timbre matches.

- Monetization Diversion: If a user uploads a track featuring an unauthorized "AI Taylor Swift," the system flags it. The rights holder can then choose to block the video or, more strategically, claim the monetization. This turns the wave of AI covers into a revenue stream for the original artist, echoing the early days of user-generated content (UGC) music policies.

4.2 Face Management Technology

Beyond audio, YouTube introduced "Likeness Management." This allows individuals (actors, creators) to register their facial geometry. The system scans the platform for deepfakes. This is a critical safety tool, particularly for female creators who have been disproportionately targeted by non-consensual deepfake pornography. The technology allows for rapid, automated takedowns of such content, bypassing the slower manual reporting forms of the past.

5. Spotify’s Algorithmic Firewall: The September 2025 Policies

While YouTube battles for visual authenticity, Spotify is engaged in a war against Artificial Streaming and Catalog Dilution. The barrier to entry for music creation has collapsed; anyone with a laptop can generate 1,000 "lo-fi" tracks in an hour. This created a crisis: the royalty pool (a finite amount of money paid out to artists) was being drained by bots and noise. In September 2025, Spotify responded with a suite of aggressive policies designed to "clean the stream".

5.1 The 75 Million Track Purge

The scale of the problem was revealed when Spotify announced it had removed 75 million spam tracks in the preceding 12 months. To put this in perspective, this number exceeds the entire catalog of music that existed on the platform just a decade prior. These tracks were largely "functional spam"—generic ambient loops, slightly altered duplicates of existing songs, and "sound-alike" tracks designed to trick users. The 2025 purge signaled that Spotify is no longer a neutral host; it is an active curator. The introduction of the Music Spam Filter in late 2025 automates this process, using audio analysis to reject "slop" at the upload stage before it ever reaches the listener.

5.2 The Economics of "Functional Noise"

A major loophole in the streaming economy was "Functional Noise"—white noise, rain sounds, and fan static. Because Spotify pays per stream, a 31-second track of white noise earned the same royalty as a 31-second excerpt of a complex symphony. Spammers exploited this by uploading albums with hundreds of short noise tracks.

- The 2-Minute Rule: As of 2025, functional noise tracks must be at least two minutes long to be eligible for any monetization. This destroys the "track splitting" strategy: an album of fixed runtime holds roughly a quarter as many 2-minute tracks as 31-second ones, cutting its potential revenue by about 75%.

- Valuation Adjustment: Furthermore, Spotify has re-weighted the royalty calculation. A stream of "noise" is now valued at a fraction of the value of a "music" stream. This effectively reallocates money from "passive listening" (sleep sounds) to "active artistry" (songs).
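The figure of roughly 75% quoted for the 2-Minute Rule can be checked with simple arithmetic. The sketch below assumes a hypothetical 62-minute noise album played end to end, with each full track play counting as one stream; the album length is chosen only to make the numbers divide cleanly.

```python
def track_count(album_seconds: int, track_seconds: int) -> int:
    """How many whole tracks of a given length fit in a fixed album runtime."""
    return album_seconds // track_seconds


ALBUM_SECONDS = 62 * 60  # hypothetical album: 62 minutes of white noise

before = track_count(ALBUM_SECONDS, 31)   # split into 31-second tracks
after = track_count(ALBUM_SECONDS, 120)   # split into 2-minute tracks
reduction = 1 - after / before

print(f"{before} -> {after} tracks, {reduction:.0%} fewer streams per playthrough")
# 120 -> 31 tracks, 74% fewer streams per playthrough
```

The same listening session therefore produces about a quarter of the streams it used to, before the reduced per-stream valuation for noise is even applied.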

5.3 The 1,000 Stream Threshold

Perhaps the most controversial change is the 1,000 stream threshold. A track must generate at least 1,000 streams in a 12-month period to trigger a payout.

- The Logic: This policy is a direct attack on the "Long Tail of Garbage." AI content farms often operate by uploading millions of tracks, expecting each to get only a few dozen streams. Individually, they are worthless; collectively, they siphoned millions of dollars. The 1,000 stream threshold renders this strategy non-viable. If a track cannot find a genuine audience of at least ~100 people (assuming 10 listens each), Spotify deems it "non-monetizable inventory."

- Impact on Indie Artists: While intended to stop bots, this policy also affects genuine micro-indie artists. It forces creators to focus on marketing and community building rather than just distribution.
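A short sketch shows why the threshold undermines the long-tail strategy. The catalog below is invented for illustration (5,000 tracks at 40 streams each), and the function names are hypothetical; only the 1,000-stream threshold and the 10-plays-per-listener assumption come from the text.

```python
import math


def payable_tracks(streams_last_12m: dict[str, int], threshold: int = 1000) -> list[str]:
    """Tracks that reach the payout threshold within the 12-month window."""
    return sorted(t for t, n in streams_last_12m.items() if n >= threshold)


def listeners_needed(threshold: int = 1000, plays_per_listener: int = 10) -> int:
    """Minimum audience size if every listener plays the track equally often."""
    return math.ceil(threshold / plays_per_listener)


# Hypothetical content farm: many tracks, a few dozen streams each.
farm = {f"loop_{i:04d}": 40 for i in range(5000)}

print(sum(farm.values()))      # 200000 streams in total
print(len(payable_tracks(farm)))  # 0 tracks eligible for payout
print(listeners_needed())      # 100
```

The farm accumulates 200,000 streams in aggregate, yet no single track qualifies, so the whole catalog earns nothing under the per-track rule.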

5.4 Artificial Streaming Fines

Spotify has moved from passive defense to financial offense. The platform now charges distributors a penalty fee for every track flagged for flagrant artificial streaming.

- The Ripple Effect: This policy forced distributors (like DistroKid, TuneCore, CD Baby) to become the platform's police force. Distributors now aggressively ban accounts that show signs of bot activity (e.g., 10,000 streams from a single IP address) because they cannot afford to pay Spotify’s fines. For the AI creator, this means that using a "view bot" or "stream farm" service is no longer just a risk of ban—it is a guarantee of immediate termination by the distributor.
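The kind of signal a distributor might screen for, such as thousands of streams from one IP address, can be sketched as a concentration check. This is an illustrative heuristic under stated assumptions: the `min_streams` and `max_share` cutoffs are invented, and real fraud detection would combine many more signals.

```python
from collections import Counter


def top_ip_share(stream_ips: list[str]) -> float:
    """Fraction of all streams that come from the single busiest IP address."""
    if not stream_ips:
        return 0.0
    busiest_count = Counter(stream_ips).most_common(1)[0][1]
    return busiest_count / len(stream_ips)


def looks_artificial(
    stream_ips: list[str],
    min_streams: int = 1000,  # hypothetical: ignore tiny samples
    max_share: float = 0.5,   # hypothetical: one IP should not dominate
) -> bool:
    return len(stream_ips) >= min_streams and top_ip_share(stream_ips) > max_share


# Addresses come from the reserved documentation ranges.
bot_like = ["203.0.113.7"] * 10_000
organic = [f"198.51.100.{i % 250}" for i in range(2_000)]

print(looks_artificial(bot_like))  # True
print(looks_artificial(organic))   # False
```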

6. The Metadata Standard: DDEX and the Future of Labeling

Both YouTube and Spotify are converging on a single technical solution: Metadata. The days of "trust me, it's real" are over. The industry is adopting the DDEX (Digital Data Exchange) standards to encode the provenance of media.

6.1 Understanding DDEX and RIN

DDEX is the consortium that sets the standards for how digital music supply chains communicate. The new Recording Information Notification (RIN) standards include specific fields for AI participation.

- Granular Attribution: The metadata allows for nuanced distinctions. It is not just "AI: Yes/No." It includes fields for:

  - AI-Generated Composition: the melody or chords were AI-generated.

  - AI-Generated Performance: the singer or instrumentalist is AI.

  - AI-Assisted Post-Production: mixing or mastering was done with AI.

- Implementation: Spotify has begun ingesting this data. While currently used for internal sorting and rights management, the roadmap suggests this will power user-facing features. We anticipate "Human Only" playlists or filters that allow purists to block AI vocals.
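The granular attribution described above can be modeled as a small record. The field names below are hypothetical and chosen to mirror the three categories in the text; they are not the actual element names in the DDEX RIN schema, and the "human vocals" filter is the speculative feature anticipated above, not an existing Spotify function.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AiAttribution:
    """Hypothetical per-track AI flags (not real DDEX element names)."""
    ai_generated_composition: bool = False
    ai_generated_performance: bool = False
    ai_assisted_post_production: bool = False

    def any_ai(self) -> bool:
        return any(asdict(self).values())


def passes_human_vocals_filter(attribution: AiAttribution) -> bool:
    """A 'Human Only' vocal filter would reject only AI performances."""
    return not attribution.ai_generated_performance


ai_mastered = AiAttribution(ai_assisted_post_production=True)
ai_singer = AiAttribution(ai_generated_performance=True)

print(ai_mastered.any_ai(), passes_human_vocals_filter(ai_mastered))  # True True
print(ai_singer.any_ai(), passes_human_vocals_filter(ai_singer))      # True False
```

Separate flags let a track mastered with AI tools remain in a human-vocals playlist, which a single yes/no field could not express.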

6.2 C2PA and the Content Credentials

Parallel to DDEX in music, the video world (YouTube) is adopting C2PA (Coalition for Content Provenance and Authenticity). This is an open technical standard that allows publishers to embed tamper-evident metadata in files.

- The Digital Watermark: When a creator uses a tool like Adobe Firefly or YouTube Dream Screen, a C2PA manifest is attached to the file. This manifest travels with the video. When uploaded to YouTube, the player reads this manifest and automatically triggers the "Altered or Synthetic" label.

- The Compliance Gap: Currently, many open-source AI tools (like local Stable Diffusion installations) do not automatically attach C2PA data. This creates a compliance gap where creators using local open-source tools must disclose manually, while those using commercial tools that embed C2PA manifests are tagged automatically.
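The compliance gap can be made concrete with a check over a manifest that has already been parsed into a dictionary. This is a simplified sketch: the dictionary shape (an `assertions` list containing a `c2pa.actions` entry) is an assumption about how a manifest might look after parsing, the function names are hypothetical, and the source-type URI is the IPTC term for media produced by a trained model.

```python
from typing import Optional

# IPTC digital source type used to mark generative-AI media.
AI_SOURCE_TYPE = (
    "http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia"
)


def declares_ai_generation(manifest: dict) -> bool:
    """True if any recorded action marks the asset as AI-generated."""
    for assertion in manifest.get("assertions", []):
        if assertion.get("label") != "c2pa.actions":
            continue
        for action in assertion.get("data", {}).get("actions", []):
            if action.get("digitalSourceType") == AI_SOURCE_TYPE:
                return True
    return False


def needs_manual_disclosure(manifest: Optional[dict], is_realistic_ai: bool) -> bool:
    """Realistic AI media without a declaring manifest must be labeled by hand."""
    if not is_realistic_ai:
        return False
    return manifest is None or not declares_ai_generation(manifest)


# Hypothetical manifest as a commercial generator might attach it.
tagged = {
    "assertions": [
        {
            "label": "c2pa.actions",
            "data": {
                "actions": [
                    {"action": "c2pa.created", "digitalSourceType": AI_SOURCE_TYPE}
                ]
            },
        }
    ]
}

print(needs_manual_disclosure(tagged, is_realistic_ai=True))  # False
print(needs_manual_disclosure(None, is_realistic_ai=True))    # True
```

A file rendered by a local model with no manifest lands in the second case, which is exactly where the manual checkbox becomes the creator's responsibility.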

7. The Impersonation Crisis: "Ghostwriters" and Deepfakes

The "Ghostwriter" incident of 2023—where an AI song mimicking Drake and The Weeknd went viral—served as the catalyst for the strict impersonation policies we see in 2025.

7.1 Spotify’s Impersonation Ban

Spotify’s 2025 policy is explicit: Unauthorized vocal impersonation is prohibited.

- Clarification: Using AI to create a new singer (e.g., "Hatsune Miku 2.0") is allowed. Using AI to mimic Beyoncé without her permission is banned.

- Enforcement: Spotify has invested in "Content Mismatch" technology. This system analyzes the audio fingerprint of uploads. If a track is tagged as "Artist: Unknown" but contains vocals that match the spectral footprint of "Artist: Drake," it is flagged for manual review.
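The mismatch check described above can be sketched as a nearest-voice comparison. Everything here is an assumption for illustration: real systems would derive voice embeddings from audio with a trained model, whereas this example uses invented three-dimensional vectors, a hypothetical `threshold`, and placeholder artist names.

```python
import math
from typing import Optional


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def find_mismatch(
    claimed_artist: str,
    upload_voice: list[float],
    reference_voices: dict[str, list[float]],
    threshold: float = 0.95,  # hypothetical similarity cutoff
) -> Optional[str]:
    """Return a known artist whose voice matches although the credit differs."""
    for artist, reference in reference_voices.items():
        if artist != claimed_artist and cosine(upload_voice, reference) >= threshold:
            return artist
    return None


# Toy reference embeddings for two placeholder artists.
references = {
    "Artist A": [0.9, 0.1, 0.4],
    "Artist B": [0.1, 0.8, 0.2],
}
upload = [0.88, 0.12, 0.41]

print(find_mismatch("Unknown", upload, references))   # Artist A
print(find_mismatch("Artist A", upload, references))  # None
```

A non-`None` result would queue the track for manual review rather than trigger an automatic takedown, in line with the enforcement flow described above.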

7.2 The Role of Authorized AI

Interestingly, both platforms have left the door open for authorized AI.

- YouTube’s Dream Track: YouTube is experimenting with tools that allow creators to use licensed AI voices of participating artists (e.g., Charlie Puth, Sia) for Shorts. This creates a legal, monetized framework for AI music.

- The Future Business Model: We are moving toward a world where an artist’s "AI Voice Model" is a licensable asset, distinct from their discography. Creators will pay a subscription to use "Official Taylor Swift AI" for their background music, with royalties flowing back to the artist.

8. Infrastructure for the AI-Native Creator: A Hardware Imperative

The stringent policies of 2025 create a paradox: Creators need AI to compete (for efficiency), but they must avoid the "mass-produced" look of cloud-based AI tools to survive monetization filters. The solution lies in Local AI Processing. By running open-source models (like LLaMA 4, Stable Diffusion XL Turbo, or RVC) locally on their own hardware, creators can fine-tune outputs to be unique. A cloud API gives the same generic output to millions of users (triggering "repetitious content" filters). A locally fine-tuned model, trained on the creator’s own previous scripts and voice, produces content that is fingerprint-unique.

8.1 The Hardware Bottleneck

Running these models requires significant computational power, specifically VRAM (Video RAM) on the GPU. The standard 8GB VRAM found in consumer laptops is often insufficient for advanced 2025 workflows involving video generation or large-batch image processing. However, professional workstations from brands like Dell or Apple can cost upwards of $4,000—a barrier for many indie creators.
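A back-of-the-envelope estimate shows why 8GB is tight. The sketch below counts model weights only (parameters times bytes per parameter) and ignores activations, caches, and framework overhead, so real usage is higher. The 7-billion-parameter model and the 90% usable-memory `headroom` are assumptions chosen for illustration.

```python
def weights_gib(params_billions: float, bytes_per_param: float) -> float:
    """Memory needed for the model weights alone, in GiB."""
    return params_billions * 1e9 * bytes_per_param / 2**30


def fits(
    params_billions: float,
    bytes_per_param: float,
    vram_gib: float,
    headroom: float = 0.9,  # hypothetical share of VRAM usable for weights
) -> bool:
    return weights_gib(params_billions, bytes_per_param) <= vram_gib * headroom


# Hypothetical 7B-parameter model at 16-bit (2 bytes) and 4-bit (0.5 bytes).
print(f"{weights_gib(7, 2):.1f} GiB")    # 13.0 GiB
print(fits(7, 2, vram_gib=8))            # False
print(fits(7, 2, vram_gib=16))           # True
print(fits(7, 0.5, vram_gib=8))          # True
```

Quantizing to 4 bits brings the same model within reach of an 8GB card, but fine-tuning and video generation add memory demands that this weights-only estimate does not capture.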

8.2 Strategic Resource: The Rise of Chinese OEMs

In response to this cost barrier, a growing segment of the creator economy is turning to high-performance laptops from Chinese OEMs. These devices often utilize the same silicon (NVIDIA RTX 40-series and 50-series mobile chips) as Western brands but undercut them on price by 30-40% due to supply chain proximity and lower marketing overheads.

CTA: Optimizing Your Studio with LaptopChina.tech

For creators looking to upgrade their infrastructure to handle local AI workloads without compromising their budget, laptopchina.tech has emerged as a critical resource.

- Why it Matters: The site offers in-depth reviews of models from manufacturers like Xiaomi, Mechrevo, and Hasee—brands that are often overlooked in the West but offer "performance-per-dollar" ratios that are essential for the AI age.

- The AI Advantage: Reviews on laptopchina.tech specifically benchmark thermal performance during sustained loads (like rendering a 4K AI video). This intelligence is vital, as thermal throttling is the enemy of productivity.

- Recommendation: We strongly advise creators to consult these reviews to find laptops with at least 12GB, and ideally 16GB, of VRAM. This hardware specification allows for the local execution of "uncensored" and "custom" AI models, granting the creator total control over their creative output and supporting the "uniqueness" required by YouTube’s new policies.

- Subscribe: Staying updated with laptopchina.tech ensures you are the first to know about new hardware releases that can give you a competitive edge in content production speed and quality.

9. Strategic Conclusions and Future Outlook (2026)

As we look toward 2026, the trajectory is clear: The internet is segregating into "Verified Human" and "Unverified Synthetic" zones.

9.1 The "Blue Check" for Content

We predict that YPP and Spotify for Artists will eventually require biometric verification (e.g., World ID or government ID) to unlock the highest tiers of monetization. This will be the ultimate filter against bot farms.

9.2 The Premium on Raw Reality

Counter-intuitively, the rise of AI will increase the value of "low-fi" reality. Vlogs, livestreams, and acoustic recordings—formats that are difficult to fake convincingly in real-time—will see their CPMs rise. Advertisers will pay a premium to be associated with "proven reality."

9.3 Actionable Checklist for Creators

To thrive in this environment, creators must adopt a "Compliance-First" mindset:

- Audit Your Catalog: Review all existing content. If you have "slop" or low-effort AI videos, consider setting them to private to avoid a channel-wide "repetitious content" flag.

- Disclose Proactively: Use the YouTube "Altered/Synthetic" tool. It is better to be labeled and monetized than unlabeled and shadowbanned.

- Humanize the Loop: Never upload raw AI output. Always add a human layer—editorial cuts, your own voice, or significant visual transformative work.

- Upgrade Infrastructure: Move away from generic cloud tools. Invest in hardware (researched via laptopchina.tech) to run custom, local AI models that define your unique style.

- Respect the Metadata: If you are a musician, ensure your distributor is filling out the RIN/DDEX fields correctly. Transparency is the currency of the future.

The era of "easy AI money" is over. The "YouTube AI monetization rules" and Spotify’s 2025 policies are the new laws of the land. They reward the architects of AI content, not the consumers of it. By understanding these rules, creators can transition from being at risk of replacement to being masters of the new medium.

Appendix: Data & Policy Reference Tables

Table 1: YouTube AI Policy Enforcement Matrix (July 2025 Update)

AI Content Risk, Disclosure, and Monetization Policy

| Content Category | Risk Level | Disclosure Required? | Monetization Status (YPP) |
|---|---|---|---|
| AI-Assisted Scripting | Low | No | Eligible (Productivity Exception) |
| AI Voice Cloning (Authorized) | Medium | Yes (Label) | Eligible (If original rights cleared) |
| AI Voice Cloning (Unauthorized) | High | Yes (Label) | Demonetized / Removed |
| AI Visuals (Abstract/Fantasy) | Low | No | Eligible (No label needed) |
| AI Visuals (Realistic Events) | High | Yes (Label) | Eligible (With mandatory label) |
| Mass-Produced AI Compilations | Extreme | N/A | Ineligible (Repetitious Content Policy) |

Table 2: Spotify Royalty Eligibility Thresholds (Sept 2025 Update)

Music Streaming Policy Changes: Algorithmic Noise/Ambient Content

| Metric | Requirement | Policy Rationale |
|---|---|---|
| Minimum Streams | 1,000 per 12 months | Eliminates "**long-tail**" spam profitability (tracks that generate negligible royalties). |
| Functional Noise Length | Minimum 2 Minutes | Prevents "**track-splitting**" abuse, where short tracks are used to inflate stream counts. |
| Noise Royalty Rate | Reduced (Fractional) | Prioritizes musical artistry over static noise content in the royalty pool distribution. |
| Artificial Streaming | Zero Tolerance | **Fines levied** against distributors for manipulation and bot violations. |
| AI Metadata (RIN) | Mandatory | Ensures transparency for rights holders and compliance agencies regarding AI involvement. |

This report aggregates analysis from official platform documentation including YouTube's "Our Policies", Spotify's "Newsroom", and technical standards from DDEX.
